
| Tip |
|---|
| Video stream and scene requirements for the Neural classifier |

To configure the Neural classifier, do the following:

- Go to the Detectors tab.

- Below the required camera, click Create… → Category: Production Safety → Neural classifier.

By default, the detector is enabled and set to detect objects in the frame.

If necessary, you can change the detector parameters. The parameters are listed in the table:

| Parameter | Value | Description |
|---|---|---|
| Object features | | |
| Record mask to archive | Yes, No | By default, the sensitivity scale of the detector is recorded to the archive (see Displaying information from a detector (mask)). To disable the parameter, select the No value |
| Video stream | Main stream | If the camera supports multistreaming, select the stream for which detection is needed. Selecting a low-quality video stream reduces the load on the server |
| Other | | |
| Enable | Yes, No | The detector is enabled by default. To disable the detector, select the No value |
| Name | Neural classifier | Enter the detector name or leave the default name |
| Decoder mode | Auto, CPU, GPU, Huawei NPU | Select a processing resource for decoding video streams. When you select a GPU, a stand-alone graphics card takes priority (when decoding with Nvidia NVDEC chips). If there is no appropriate GPU, decoding uses the Intel Quick Sync Video technology. Otherwise, CPU resources are used for decoding |
| Number of frames processed per second | 0.1 | Specify the number of frames that the detector processes per second. The value must be in the range [0.016, 100] |
| Selected object classes | | If necessary, specify the class of the detected object. To display tracks of several classes, separate them with a comma and a space, for example, 1, 10 |
| Type | Neural classifier | Name of the detector type (non-editable field) |
| Advanced settings | | |
| Neural network file | | Specify the path to the neural network file |
| Number of measurements in a row to trigger detection | 5 | Specify the minimum number of frames on which the detector must detect an object to generate an event. The value must be in the range [5, 20] |
| Scanning mode | Yes, No | The parameter is disabled by default. To detect objects without changing the frame size, select the Yes value. The scanning mode works only if the neural network supports it |
| Basic settings | | |
| Mode | CPU, Nvidia GPU 0, Nvidia GPU 1, Nvidia GPU 2, Nvidia GPU 3, Intel GPU, Intel NCS (not supported), Intel Multi-GPU, Intel GPU 0, Intel GPU 1, Intel GPU 2, Intel GPU 3, Intel HDDL (not supported), Huawei NPU | Select a processor for the neural network operation (see Hardware requirements for neural analytics operation, Selecting Nvidia GPU when configuring detectors) |
| Sensitivity | 33 | Specify the detector sensitivity empirically. The value must be in the range [1, 99]. The preview window displays the sensitivity scale of the detector, which corresponds to the Sensitivity parameter. If the scale is green, no object is detected. If the scale is yellow, an object is detected, but not enough to generate an event. If the scale is red, an object is detected, and the detector generates an event if the scale stays red throughout the sampling period (50 seconds by default) |
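As an illustration only, the documented ranges and the class-list format can be checked before you apply the settings. The sketch below is a minimal, hypothetical validator. The class `ClassifierSettings`, its field names and the helper `parse_object_classes` are invented for this example and are not part of the product. It also assumes that class identifiers are non-negative integers, as in the `1, 10` example.

```python
from dataclasses import dataclass
from typing import List

# Inclusive ranges taken from the parameter table.
FPS_RANGE = (0.016, 100.0)      # Number of frames processed per second
MEASUREMENTS_RANGE = (5, 20)    # Number of measurements in a row to trigger detection
SENSITIVITY_RANGE = (1, 99)     # Sensitivity


def parse_object_classes(text: str) -> List[int]:
    """Parse a 'Selected object classes' value such as "1, 10".

    Entries must be separated by a comma followed by a space.
    An empty value means that no class filter is set.
    Assumption: class identifiers are non-negative integers.
    """
    if not text.strip():
        return []
    classes = []
    for part in text.split(", "):
        if not part.isdecimal():
            raise ValueError(f"invalid class entry {part!r} in {text!r}")
        classes.append(int(part))
    return classes


@dataclass
class ClassifierSettings:
    """Hypothetical settings holder; field names are illustrative only."""
    fps: float = 0.1
    measurements_in_row: int = 5
    sensitivity: int = 33
    object_classes: str = ""

    def validate(self) -> List[str]:
        """Return a list of human-readable problems (empty if the settings are valid)."""
        errors = []
        checks = [
            ("fps", self.fps, FPS_RANGE),
            ("measurements_in_row", self.measurements_in_row, MEASUREMENTS_RANGE),
            ("sensitivity", self.sensitivity, SENSITIVITY_RANGE),
        ]
        for name, value, (low, high) in checks:
            if not low <= value <= high:
                errors.append(f"{name}={value} is outside [{low}, {high}]")
        try:
            parse_object_classes(self.object_classes)
        except ValueError as exc:
            errors.append(str(exc))
        return errors


if __name__ == "__main__":
    print(ClassifierSettings().validate())
    print(ClassifierSettings(fps=200.0, object_classes="1,10").validate())
```

The second call reports two problems: the frame rate is above 100, and `1,10` lacks the space after the comma.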

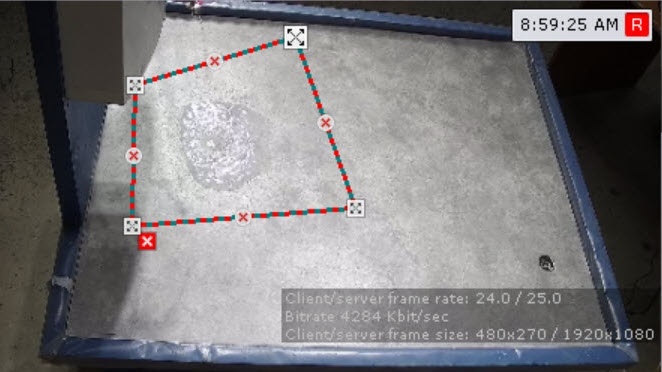
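Two table parameters interact. Number of frames processed per second sets how often the detector looks at the video. Number of measurements in a row to trigger detection sets how many processed frames must contain the object before an event is generated. The sketch below illustrates that relationship. It assumes that "in a row" means consecutive processed frames. The function names are hypothetical, and the sketch does not reproduce the product's internal logic.

```python
from typing import Sequence


def detection_window_seconds(fps: float, measurements_in_row: int) -> float:
    """Time covered by `measurements_in_row` processed frames at `fps` frames per second.

    Each processed frame accounts for 1 / fps seconds of video.
    """
    if fps <= 0:
        raise ValueError("fps must be positive")
    return measurements_in_row / fps


def should_trigger(detections: Sequence[bool], measurements_in_row: int) -> bool:
    """Return True if the last `measurements_in_row` processed frames all contain a detection.

    `detections` holds one flag per processed frame, oldest first.
    Assumption: "in a row" means consecutive processed frames.
    """
    if measurements_in_row < 1:
        raise ValueError("measurements_in_row must be at least 1")
    if len(detections) < measurements_in_row:
        return False
    return all(detections[-measurements_in_row:])


if __name__ == "__main__":
    print(detection_window_seconds(0.1, 5))
    print(should_trigger([True, False, True, True, True, True, True], 5))
```

With the default values (0.1 frames per second and 5 measurements), `detection_window_seconds` gives 50 seconds. That is the same figure the Sensitivity row quotes for the default sampling period. Treating the two values as related is an assumption; this page does not state it.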

By default, the entire frame is the detection area. In the preview window, you can specify detection areas using anchor points (see Configuring a detection area):

- Right-click in the preview window.

- To specify the detection area with one or more rectangles, select Detection area (rectangle). If you specify a rectangular area, the detector analyzes only this area. The rest of the frame is ignored.

- To specify the detection area with one or more polygons, select Detection area (polygon). If you specify one or more polygonal areas, the detector analyzes the entire frame. The part of the frame outside the specified polygons is blacked out.
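The difference between the two area types can be pictured as cropping versus masking. The following sketch is a simplified model, not the product's implementation. A frame is a list of pixel rows. A rectangle crops the frame to that area. Polygons keep the full frame size and set pixels outside every polygon to black (0). The ray-casting point-in-polygon test and all function names are illustrative assumptions, and only a single rectangle is modeled.

```python
from typing import List, Sequence, Tuple

Frame = List[List[int]]
Point = Tuple[float, float]


def crop_rectangle(frame: Frame, x0: int, y0: int, x1: int, y1: int) -> Frame:
    """Rectangle model: keep only pixels with x0 <= x < x1 and y0 <= y < y1."""
    return [row[x0:x1] for row in frame[y0:y1]]


def point_in_polygon(x: float, y: float, polygon: Sequence[Point]) -> bool:
    """Even-odd (ray casting) test: count polygon edges crossed by a ray going right."""
    inside = False
    for i in range(len(polygon)):
        xi, yi = polygon[i]
        xj, yj = polygon[i - 1]  # previous vertex; index -1 closes the polygon
        if (yi > y) != (yj > y):
            x_cross = xi + (y - yi) * (xj - xi) / (yj - yi)
            if x < x_cross:
                inside = not inside
    return inside


def mask_polygons(frame: Frame, polygons: Sequence[Sequence[Point]], fill: int = 0) -> Frame:
    """Polygon model: keep the frame size, black out pixels outside every polygon."""
    masked = []
    for y, row in enumerate(frame):
        new_row = []
        for x, value in enumerate(row):
            cx, cy = x + 0.5, y + 0.5  # test the pixel centre
            keep = any(point_in_polygon(cx, cy, poly) for poly in polygons)
            new_row.append(value if keep else fill)
        masked.append(new_row)
    return masked


if __name__ == "__main__":
    frame = [[1] * 4 for _ in range(4)]
    print(crop_rectangle(frame, 1, 1, 3, 3))
    print(mask_polygons(frame, [[(0, 0), (2, 0), (2, 2), (0, 2)]]))
```

The cropped frame is smaller than the original. The masked frame keeps its original size, which matches the statement that with polygons the detector still analyzes the entire frame.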

Attention! You must select the detection area type (polygon or rectangle) experimentally. For some neural networks, detection quality is better with a rectangle; for others, with a polygon.


To save the detector parameters, click the Apply button. To cancel the changes, click the Cancel button.

Configuring the Neural classifier is complete.